Abstract

A lower and upper solution method is introduced for control problems related to abstract operator equations. The method is illustrated on a control problem for the Lotka–Volterra model with seasonal harvesting and applied to a control problem of cell evolution after bone marrow transplantation.

Authors

Lorand Gabriel Parajdi

Department of Mathematics, West Virginia University, P.O. Box 6201, Morgantown, WV 26506, USA; e-mail: lorand.parajdi@mail.wvu.edu

Department of Mathematics, Babes–Bolyai University, M. Kogalniceanu, Cluj-Napoca, Romania

Radu Precup

Department of Mathematics, Babes–Bolyai University, Cluj-Napoca, Romania

Tiberiu Popoviciu Institute of Numerical Analysis, Romanian Academy

Ioan-Stefan Haplea

Department of Internal Medicine, Iuliu Hatieganu University of Medicine and Pharmacy, Victor Babes Street, Cluj-Napoca, Romania

Keywords

control problem, lower and upper solutions, fixed point, approximation algorithm, numerical solution, medical application.

Paper coordinates

L.G. Parajdi, R. Precup, I.-S. Haplea, A method of lower and upper solutions for control problems and application to a model of bone marrow transplantation, Int. J. Appl. Math. Comput. Sci., 33 (2023) no. 3, 409–418, http://doi.org/10.34768/amcs-2023-0029

About this paper

Journal

Int. J. Appl. Math. Comput. Sci.

Publisher Name

Sciendo (Walter de Gruyter)

Print ISSN

1641-876X

Paper (preprint) in HTML form

Method of lower and upper solutions for control problems and application to a model of bone marrow transplantation

Abstract

This paper introduces the lower and upper solution method for control problems related to abstract operator equations. The method is illustrated on a control problem for the Lotka–Volterra model with seasonal harvesting and applied to a control problem of cell evolution after bone marrow transplantation.

Keywords: control problem, lower and upper solutions, fixed point, approximation algorithm, numerical solution, medical application

Subject Classification: 34H05, 34K35, 41A65, 65J15, 92C50

1 Introduction

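In [3] we introduced a controllability principle for a general control problem related to operator equations. It consists in finding a solution $(w,\lambda)$ to the following system

$$\begin{cases} w = N(w,\lambda) \\ w\in W,\ \lambda\in\Lambda,\ (w,\lambda)\in D \end{cases} \tag{1.1}$$

involving the fixed point equation $w=N(w,\lambda)$. Here $w$ is the state variable, $\lambda$ is the control variable, $W$ is the domain of the states, $\Lambda$ is the domain of controls and $D\subset W\times\Lambda$ is the controllability domain, which expresses a certain condition or property imposed on $w$, or on both $w$ and $\lambda$. No structure is imposed on the sets $W$ and $\Lambda$, and no properties are required of the mapping $N$ from $W\times\Lambda$ to $W$.

We say that the equation $w=N(w,\lambda)$ is controllable in $W\times\Lambda$ with respect to $D$ if problem (1.1) has a solution $(w,\lambda)$. If the solution is unique, we say that the equation is uniquely controllable.

In order to describe how the general control problem is solved, let us define the sets

$$\Sigma=\{(w,\lambda)\in W\times\Lambda:\ w=N(w,\lambda)\},\qquad \Sigma_1=\{w\in W:\ \text{there is } \lambda\in\Lambda \text{ with } (w,\lambda)\in\Sigma\}.$$

Obviously, the solutions of the control problem (1.1) are the elements of $\Sigma\cap D$.

Consider now the set-valued map $F:\Sigma_1\to\Lambda$ given by

$$F(w)=\{\lambda\in\Lambda:\ (w,\lambda)\in\Sigma\cap D\}.$$

Notice that $F$ gives the expression of the control variable in terms of the state variable.

The next proposition is a general principle for solving the control problem (1.1).

Proposition 1

If for some extension $\tilde F$ of $F$ from $\Sigma_1$ to $W$ there exists a fixed point $w\in W$ of the set-valued map

$$\tilde N(w):=N(w,\tilde F(w)),$$

i.e.,

$$w=N(w,\lambda) \tag{1.2}$$

for some $\lambda\in\tilde F(w)$, then the couple $(w,\lambda)$ is a solution of the control problem (1.1).

Proof. From (1.2), one has $(w,\lambda)\in\Sigma$. Hence $w\in\Sigma_1$ and so $\tilde F(w)=F(w)$. Then $\lambda\in F(w)$, and from the definition of $F$ it follows that $(w,\lambda)\in\Sigma\cap D$. Thus $(w,\lambda)$ is a solution of (1.1).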

In many applications and are single-valued maps and can be extended to by simply using its very expression.
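The sets used in the principle above can be made concrete on a small finite example. The sketch below is only an illustration: the names `W`, `LAM`, `N`, `in_D`, `Sigma`, `Sigma1` and `F`, as well as the toy map and the toy condition, are assumptions introduced here and are not taken from the paper. It enumerates all pairs (state, control) that solve the fixed point equation, keeps those satisfying the controllability condition, and builds the set-valued map that returns the admissible controls of a given state.

```python
from itertools import product

# Hypothetical finite toy problem, chosen only for illustration.
W = range(7)      # domain of states
LAM = range(4)    # domain of controls


def N(w, lam):
    """Toy map from W x LAM to W (an assumption, not a model from the paper)."""
    return (2 * w + lam) % 7


def in_D(w, lam):
    """Toy controllability condition, imposed here on the state only."""
    return w >= 5


# All pairs (w, lam) solving the fixed point equation w = N(w, lam).
Sigma = {(w, lam) for w, lam in product(W, LAM) if N(w, lam) == w}
# States that are first components of some solution.
Sigma1 = {w for w, _ in Sigma}
# Solutions of the control problem: fixed points that also satisfy the condition.
solutions = {(w, lam) for (w, lam) in Sigma if in_D(w, lam)}


def F(w):
    """Set-valued map giving the admissible controls for the state w."""
    return {lam for lam in LAM if (w, lam) in solutions}


print(sorted(Sigma))      # [(0, 0), (4, 3), (5, 2), (6, 1)]
print(sorted(solutions))  # [(5, 2), (6, 1)]
print(F(6), F(0))         # {1} set()
```

In this toy case the map is single-valued wherever it is nonempty, which mirrors the situation described for many applications.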

Two applications to a system modeling cell dynamics related to leukemia were included in [3] (see also [5]) and three others in [6], two of which are self-control problems. Many other illustrations of the above principle can be derived from the rich literature in control theory (see, e.g., the monograph [2]).

The aim of this note is to give an algorithm for approximation of the solutions of general control problems. It basically consists of a bisection method for the control variable inside an interval associated to a pair of lower and upper solutions. The notions of lower and upper solutions for a general control problem are defined accordingly.

2 Lower and upper method for control problems

First, we introduce in the present set-framework the notions of lower and upper solutions of a control problem. To this aim, we assume a certain partition of the solution domain

which allows us to say that the condition of controllability is targeted from the left or from the right. Thus, we can give the following definition.

Definition 2

By a lower (upper) solution of problem (1.1) we mean a pair ().

In contrast to the statement of the controllability principle from above, given in unstructured sets, from now on we shall assume a certain topological structure of the sets and accordingly the continuity of the map The topological framework is a natural one for approximation methods, when a certain meaning is required for the terms of approximant and of method convergence.

Assume that is a metric space, is a segment of a normed space with norm and that the sets are closed.

In addition, assume that the following conditions are satisfied:

- (h1) The problem admits a lower solution of the form and an upper solution

- (h2) For each there exists a unique with for

- (h3) The map is continuous.

We use now the following bisection algorithm.

Algorithm 3

Let and For execute

Step 1: Calculate

and find

Step 2:

If we are finished and is a solution of the control problem; otherwise, take

then set and go to Step 1.

The algorithm leads either to a solution when it stops, or to two sequences and such that

- (i)

-

and

- (ii)

-

and

- (iii)

-

Then the sequences and are convergent and in virtue of (iii) their limits are equal, say Clearly, Furthermore, using the continuity of and the fact that are closed, from we obtain Similarly Hence that is solves the control problem and proves the convergence of the algorithm. Thus, we have proved the following theorem.

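Theorem 4

Under assumptions (h1)–(h3), Algorithm 3 either stops after finitely many steps at a solution of the control problem, or generates sequences that converge to a solution of the control problem.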

Remark 5

Theorem 4 guarantees that the algorithm finishes after a finite number of iterations under a stop criterion of the form

for any given with Then any one of the pairs and where corresponds to the last iteration, can be considered an approximation of a solution to the control problem. The error for the control parameter is less than or equal to
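The bisection scheme with a stop criterion can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the control is taken to be a scalar (a segment in a normed space can be parametrized by a scalar in the same way), and the names `bisect_control`, `solve`, `side`, `target` and `margin` are hypothetical. The callable `solve` plays the role of the solution operator, and `side` reports whether a pair lies on the lower side (-1), satisfies the controllability condition within the margin (0), or lies on the upper side (+1).

```python
def bisect_control(solve, side, lam_lower, lam_upper, tol=1e-10, max_iter=200):
    """Bisection on a scalar control between a lower and an upper solution.

    solve(lam) returns the state associated with the control lam.
    side(w, lam) returns -1 (lower side), 0 (condition met), +1 (upper side).
    """
    lo, hi = lam_lower, lam_upper
    for _ in range(max_iter):
        lam = 0.5 * (lo + hi)          # Step 1: midpoint of the current interval
        w = solve(lam)                 # ... and the corresponding state
        s = side(w, lam)
        if s == 0 or abs(hi - lo) <= tol:
            return w, lam              # Step 2: solution found or interval small enough
        if s < 0:
            lo = lam                   # midpoint behaves like a lower solution
        else:
            hi = lam                   # midpoint behaves like an upper solution
    return w, lam


# Toy usage (assumed problem): the state is lam**3 and the goal is state == 2.
def solve(lam):
    return lam ** 3


def side(w, lam, target=2.0, margin=1e-12):
    if abs(w - target) <= margin:
        return 0
    return -1 if w < target else 1


w_star, lam_star = bisect_control(solve, side, 0.0, 2.0)
print(lam_star)
```

Each pass halves the interval of controls, so the number of iterations needed for a prescribed tolerance grows only logarithmically with the length of the initial interval.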

Remark 6

The above result does not require any ordering between

Remark 7

For concrete applications, a computer implementation of the above algorithm additionally needs an approximation procedure for the solution operator, so the algorithm is in fact applied to an approximation of that operator. Such approximations can be obtained by the method of successive approximations (whose convergence is guaranteed by the Banach and Perov fixed point theorems), Newton’s method, techniques of upper and lower solutions, variational principles, finite element methods, etc.
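As an illustration of the first option, the method of successive approximations can be sketched for a scalar fixed point equation. The map `N_toy` below is an assumption chosen so that it is a contraction in the state (Lipschitz constant 0.5), which is the setting of the Banach fixed point theorem; the function name `successive_approximations` and its parameters are likewise hypothetical.

```python
import math


def successive_approximations(N, lam, w0=0.0, tol=1e-12, max_iter=1000):
    """Approximate the fixed point of w -> N(w, lam) by iterating from w0."""
    w = w0
    for _ in range(max_iter):
        w_next = N(w, lam)
        if abs(w_next - w) <= tol:     # successive iterates agree: stop
            return w_next
        w = w_next
    raise RuntimeError("successive approximations did not converge")


def N_toy(w, lam):
    """Assumed toy map, a contraction in w with constant 0.5."""
    return 0.5 * math.cos(w) + lam


w_approx = successive_approximations(N_toy, 0.3)
print(w_approx, N_toy(w_approx, 0.3))  # the two printed values agree closely
```

A routine of this kind would take the place of the exact solution operator inside the bisection loop.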

An example. Consider the Lotka-Volterra model with seasonal harvesting

| (2.1) |

where

Assume that for two routine harvesting policies and the ratio between the two species at the end of a season is below and respectively, above a desired level The control problem is to find the appropriate harvesting policy to achieve the ratio considered optimal. Clearly, under some given initial values the system (2.1) reads equivalently as a fixed point equation

| (2.2) |

Compared to the abstract control problem stated in Section 1, here where

Also, according to Definition 2, and (with the solution of system (2.2) for and ) are lower and upper solutions of the control problem, respectively.

Notice that it seems natural that the appropriate policy should be an intermediary between the two routine policies. Our algorithm looks for it as a convex combination of the two routine strategies and thus the control problem reduces to finding for which the solution of the system

with and some given initial values satisfy
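The search for an intermediate harvesting policy can be sketched numerically. Everything specific in the block below is an assumption made for illustration: the form of the harvested Lotka–Volterra system (proportional harvesting with constant efforts `u1`, `u2`), the coefficients, the two routine policies `U_LOWER` and `U_UPPER`, the season length, the initial values and the target ratio. The sketch integrates the system with a classical Runge–Kutta scheme and bisects on the convex combination parameter until the end-of-season ratio reaches the target within a margin.

```python
def rk4(f, y0, t_end, n_steps):
    """Classical fourth-order Runge-Kutta integration on [0, t_end]."""
    h = t_end / n_steps
    t, y = 0.0, list(y0)
    for _ in range(n_steps):
        k1 = f(t, y)
        k2 = f(t + h / 2, [yi + h / 2 * ki for yi, ki in zip(y, k1)])
        k3 = f(t + h / 2, [yi + h / 2 * ki for yi, ki in zip(y, k2)])
        k4 = f(t + h, [yi + h * ki for yi, ki in zip(y, k3)])
        y = [yi + h / 6 * (a + 2 * b + 2 * c + d)
             for yi, a, b, c, d in zip(y, k1, k2, k3, k4)]
        t += h
    return y


# Assumed coefficients, routine policies and data (illustrative only).
A, B, C, D_COEF = 1.0, 1.0, 0.5, 0.5
U_LOWER = (0.4, 0.0)   # routine policy giving a final ratio below the target
U_UPPER = (0.0, 0.4)   # routine policy giving a final ratio above the target
T, X0, Y0, TARGET = 1.0, 1.0, 1.0, 1.0


def final_ratio(lam):
    """Prey/predator ratio at time T under the blended policy."""
    u1 = (1 - lam) * U_LOWER[0] + lam * U_UPPER[0]
    u2 = (1 - lam) * U_LOWER[1] + lam * U_UPPER[1]

    def rhs(t, s):
        x, y = s
        return [x * (A - B * y) - u1 * x, y * (-C + D_COEF * x) - u2 * y]

    x, y = rk4(rhs, (X0, Y0), T, 200)
    return x / y


def find_policy(margin=1e-6, max_iter=60):
    """Bisection on lam in [0, 1] until the final ratio is within the margin."""
    lo, hi = 0.0, 1.0
    for _ in range(max_iter):
        lam = 0.5 * (lo + hi)
        r = final_ratio(lam)
        if abs(r - TARGET) <= margin:
            break
        if r < TARGET:
            lo = lam
        else:
            hi = lam
    return lam, r


lam_star, ratio_star = find_policy()
print(lam_star, ratio_star)
```

With these assumed data the two routine policies bracket the target, so the bisection is well posed; for other data the bracketing has to be checked first, as in hypothesis (h1).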

3 Control of cell evolution after bone marrow transplantation

In the paper [10] (see also [7]) the following system has been introduced as a model of cell dynamics after bone marrow transplantation

| (3.1) |

Here stand for the normal, leukemic and donor cell populations at time after transplant time when their concentrations are supposed to have been Parameters stand for the growth rates of normal and leukemic cells; are their cell death rates; and are their microenvironment sensitivity rates. Also, the parameters stand for the intensity of anti-graft, anti-host and anti-leukemia effects, respectively. They totalize a large number of cell biophysical properties, as well as exterior stimulants and inhibition during the immunotherapy. Values of the parameters close to zero correspond to weak interactions, larger values quantify strong effects and their pre-transplant estimate would be crucial for transplant strategy (conditioning treatment, dose of infused cells and post-transplant immunosuppressive therapy). We note that the model was refined in [4] by considering instead of the same sensitivity rate for normal and leukemic cells, different sensitivity rates and , respectively.

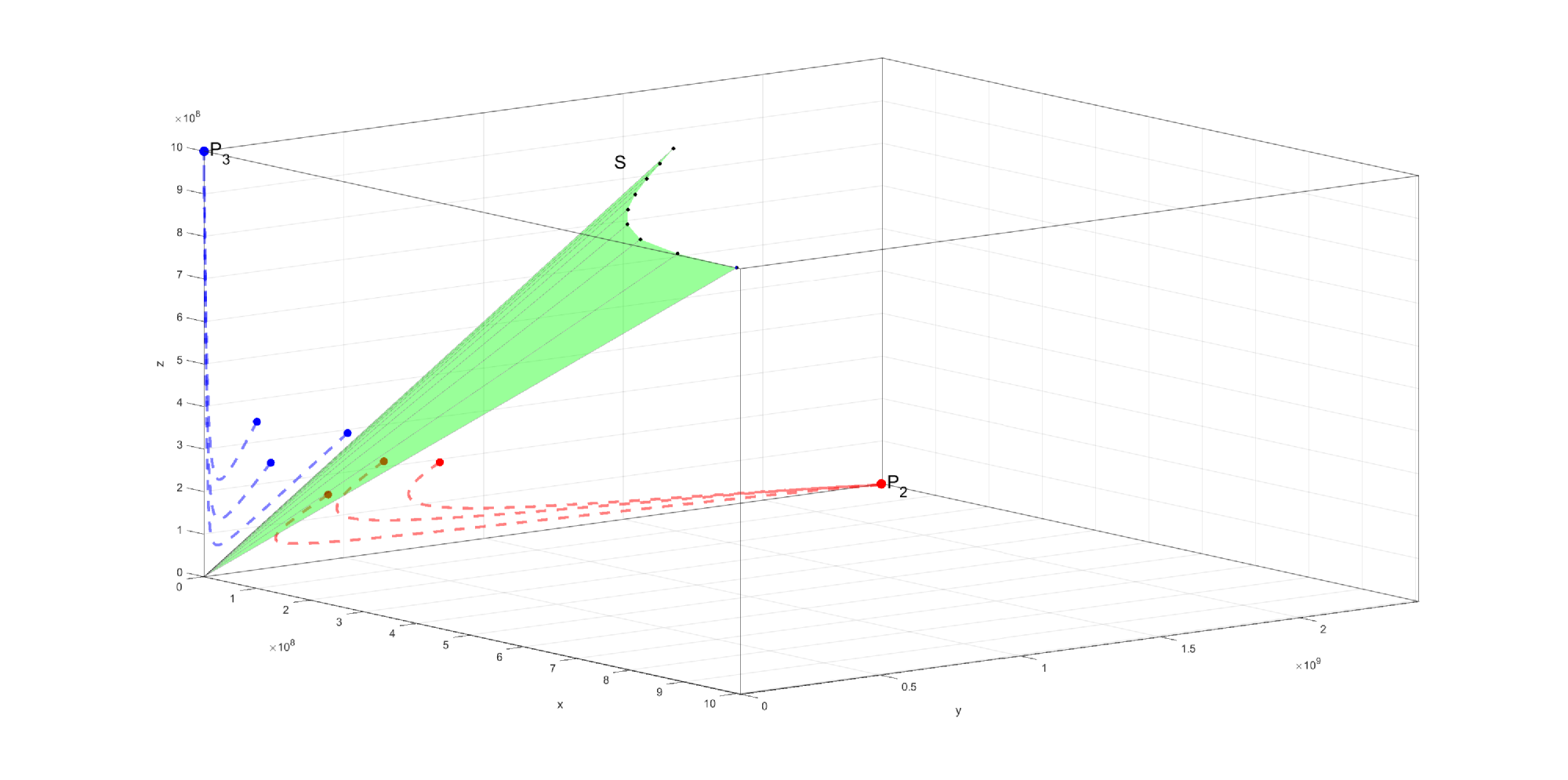

In paper [8], the equilibria of system (3.1) are found and their stability is established in terms of the system parameters. According to the main result in [8] the system has, as the numerical simulations in [9] suggested, only two asymptotically stable equilibria, namely the “bad” equilibrium and the “good” one Here the values

can be seen as homeostatic normal and cancer levels. Also, the octant of the positive states splits into two basins of attraction of the two equilibria, the “bad” basin corresponding to and the “good” one for (see Fig. 1 and the Appendix for its construction). This means that if at a given time the state belongs to the basin of attraction of either of the two equilibria, then the entire trajectory for remains in the same basin and, as a result, in time, approaches the corresponding equilibrium. Thus, a transplant appears as successful if the initial state is located in the good basin, which happens if is sufficiently large compared with and Also, an unsuccessful transplant could be turned into a successful one if by some method or therapy one can move the state from the bad basin into the good one, or if we can enlarge the good basin to catch the state inside. In fact, for any transplant, one could preventively apply the same strategy in order to move the state (even if located in the good basin) away from the separating surface between the two basins, to be sure that further perturbations cannot change the good evolution towards equilibrium

From the main result in [8] we have that the separation surface of the two basins of attraction intersects the plane along the line

and the plane along a curve close to the line

Thus, the good basin of attraction increases if at least one of the two lines goes down, which happens if one can make or/and to decrease, or/and to increase.

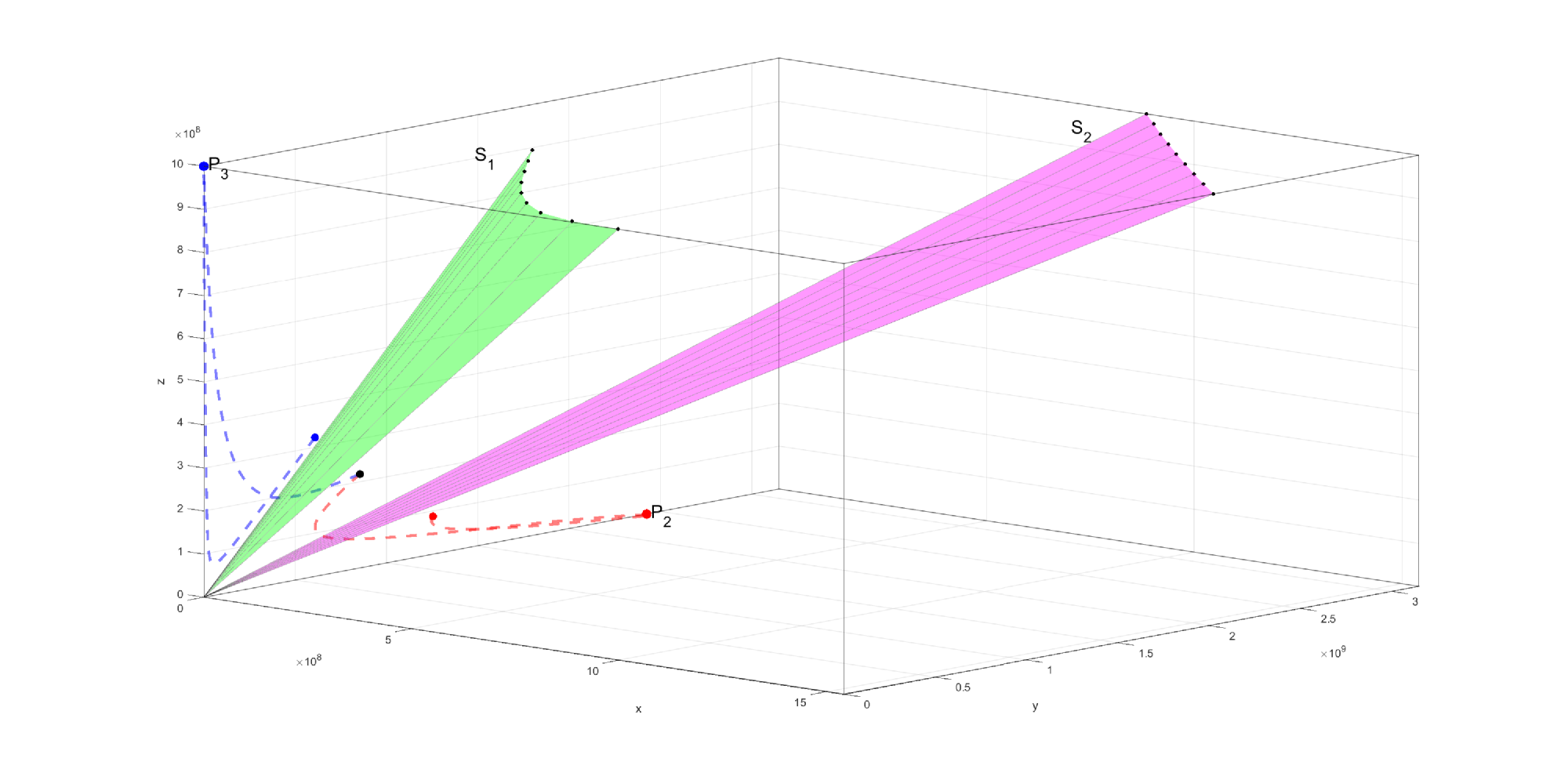

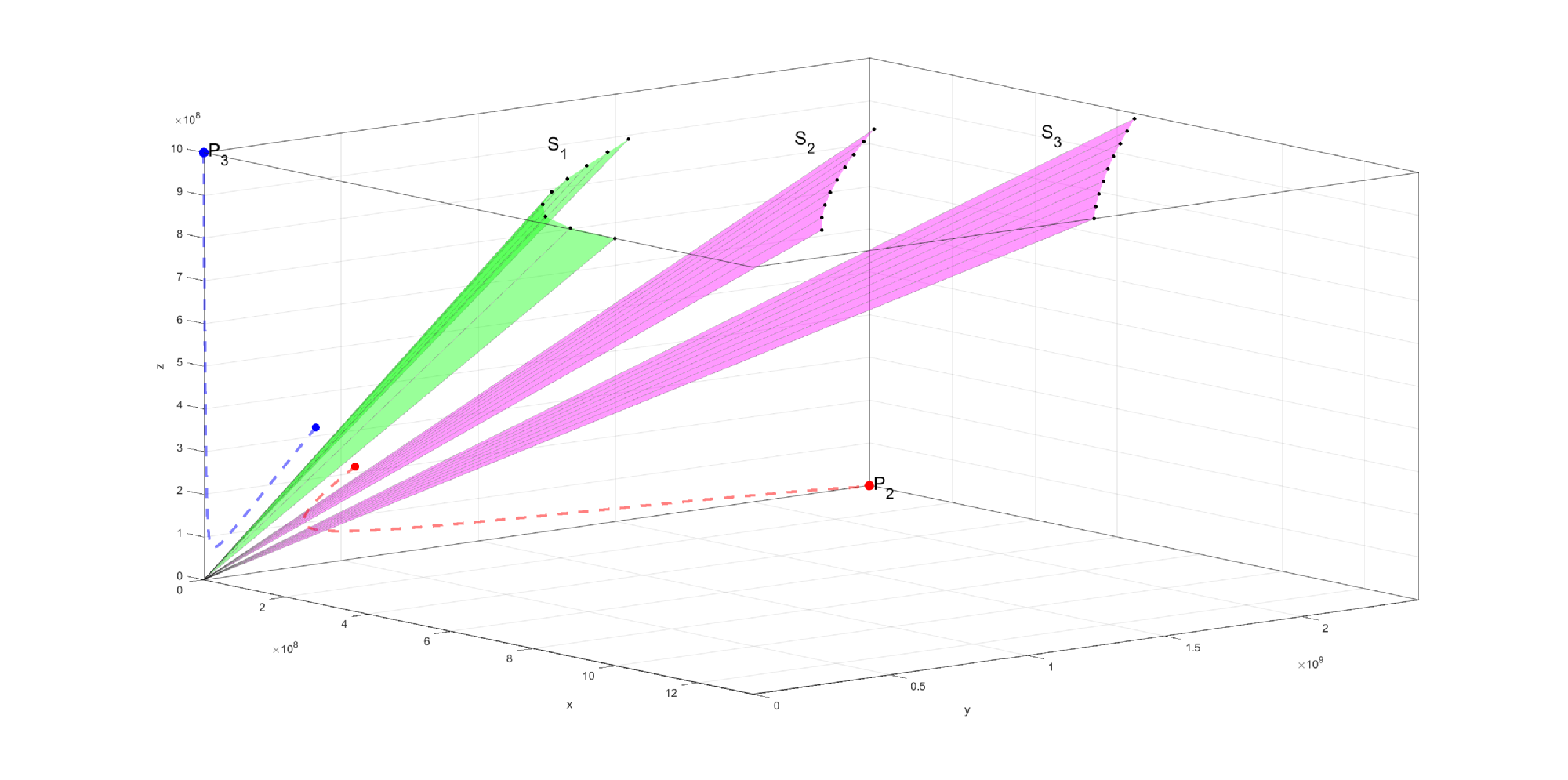

Therefore, the basin of attraction of the good equilibrium is enlarged if the separation surface goes down (see Fig. 2 and Fig. 3) and this can be achieved by

1. decreasing of anti-graft parameter

2. increasing of anti-host parameter

3. increasing of anti-cancer parameter

4. decreasing of growth rate of cancer cells

5. increasing of death rate of cancer cells

Such changes in these parameters find their counterpart in post-transplant medical practice, namely the following therapies: immunosuppressive therapy (related to ), post-transplant consolidation chemotherapy (related to and ) and donor T-lymphocyte infusion (related to and ). In [9] a series of imaginary scenarios combining these therapies have been designed and it has been shown that success depends on their intensity, the time interval in which they are applied and the time after transplantation at which they are initiated.

Denote by the factors with which the parameters and are modified on a time interval and assume that as functions they belong to For example, we can consider these functions piecewise constant, when the value one on a certain subinterval would correspond to an interruption of the therapy. Also, denote by the vector in having these components.

Assuming that after transplant one has on the time interval and that the patient’s condition is in the bad basin, that is, we want to decrease the ratio to reach the goal

meaning that the patient’s condition is brought to time in the good basin, evolving after that to the good attractor . Obviously, the patient’s exposure to corrective therapies should be reduced as much as possible. In order to find such a minimal therapy according to we can apply our lower and upper solution method.

Clearly, for a lower solution we can take the vector which corresponds to the absence of any post-transplant therapy. Then

An upper solution can be chosen by checking several vector functions with step function components satisfying for and for For it one has

Once this upper solution is found, the algorithm starts and continues until the first step at which, for one has

for an acceptable margin Then the vector can be a good approximation for the control

In this case, referring to the general framework, we have where

Also is the integral operator associated to the equivalent integral system.
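The therapy vectors described above can be represented in code as follows. This is a sketch under assumptions: the helper names `step_factor` and `blend`, the therapy window and the factor values are hypothetical, and only two components are shown for brevity. A factor equal to one means that the corresponding parameter is left unchanged (no therapy), so the lower solution is the vector of ones, and intermediate therapies are convex combinations between it and a chosen upper solution.

```python
def step_factor(value, t_start, t_end):
    """Step function equal to `value` on [t_start, t_end) and to 1 elsewhere."""
    def u(t):
        return value if t_start <= t < t_end else 1.0
    return u


def blend(lam, u_upper):
    """Convex combination between the no-therapy vector (all ones) and u_upper."""
    def u(t):
        return [(1.0 - lam) * 1.0 + lam * ui(t) for ui in u_upper]
    return u


# Assumed upper solution: one parameter halved and another doubled
# during a hypothetical therapy window from day 10 to day 40.
U_UPPER = [step_factor(0.5, 10.0, 40.0), step_factor(2.0, 10.0, 40.0)]

half_therapy = blend(0.5, U_UPPER)
print(half_therapy(20.0))  # [0.75, 1.5]  (inside the therapy window)
print(half_therapy(5.0))   # [1.0, 1.0]   (before the therapy starts)
```

The scalar `lam` is then the quantity on which the bisection of Algorithm 3 operates, with `lam = 0` corresponding to the lower solution and `lam = 1` to the upper one.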

First, we prove that the solution operator is well-defined, that is condition (h2) holds.

Lemma 8

For each the initial value problem has a unique solution

Proof. First, note the Lipschitz continuity of the system nonlinearities with respect to the variables and Thus the qualitative theory on the Cauchy problem applies to our situation including the result about the behavior of the saturated solutions in a neighborhood of the boundary of the domain where the system is defined, here (see [1, Theorem 2.10]). Thus, it remains to prove that the saturated solution of the initial value problem does not fail at the boundary. Assume the contrary. Then there is such that in and the limit at of at least one of the three functions equals zero. Let as From the first equation of the system, we have

whence which leads to a contradiction with our assumption. Similarly if as The same if as when the contradiction comes from the estimate

Finally, we prove the continuous dependence on of the solution that is condition (h3).

Lemma 9

The solution operator is continuous from to

Proof. From the integral system, using the Lipschitz continuity of the nonlinearities and the Volterra property of the equations, we deduce for the estimates of the form

with respect to a Bielecki norm on and any number . Choosing sufficiently large, we have that the spectral radius of the matrix is less than one. It follows that

This clearly shows the continuity on of and
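For readers less familiar with the device used in this proof, the standard estimate behind a Bielecki norm can be written out. The symbols $\theta$, $T$ and $\|\cdot\|_\theta$ below are notation assumed here for illustration. The norm weights the sup norm by a decaying exponential, and a Volterra integral then gains a factor $1/\theta$, which is why a sufficiently large parameter makes the relevant constants as small as needed:

```latex
\|u\|_{\theta} := \max_{t\in[0,T]} |u(t)|\, e^{-\theta t}, \qquad \theta>0,
\\[4pt]
\int_0^t |u(s)|\,ds \;\le\; \|u\|_{\theta}\int_0^t e^{\theta s}\,ds
\;\le\; \frac{e^{\theta t}}{\theta}\,\|u\|_{\theta}
\quad\Longrightarrow\quad
\Big\|\int_0^{\cdot} u(s)\,ds\Big\|_{\theta} \;\le\; \frac{1}{\theta}\,\|u\|_{\theta}.
```

Since $e^{-\theta T}\max|u|\le\|u\|_\theta\le\max|u|$, this norm is equivalent to the usual sup norm, so continuity with respect to one is continuity with respect to the other.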

Remark 10

Assuming that after transplant one has on the time interval we may apply similarly the algorithm in order to reach alternatively the goal

Acknowledgments:

L.G. Parajdi benefited from financial support through the project “Development of advanced and applied research skills in the logic of STEAM+ Health”, POCU/993/6/13/153310, a project co-financed by the European Social Fund through the Human Capital Operational Program 2014-2020.

References

- [1] Barbu, V.: Differential Equations, Springer, Cham (2016). https://doi.org/10.1007/978-3-319-45261-6

- [2] Coron, J.-M.: Control and Nonlinearity, Mathematical Surveys and Monographs Vol. 136, Amer. Math. Soc., Providence (2007). https://doi.org/10.1090/surv/136

- [3] Haplea, I.Ş., Parajdi, L.G., Precup, R.: On the controllability of a system modeling cell dynamics related to leukemia, Symmetry 13(10), 1867 (2021). https://doi.org/10.3390/sym13101867

- [4] Parajdi, L.G.: Stability of the equilibria of a dynamic system modeling stem cell transplantation, Ric. Mat. 69(2), 579–601 (2020). https://doi.org/10.1007/s11587-019-00473-9

- [5] Parajdi, L.G., Patrulescu, F.O., Precup, R., Haplea, I.Ş.: Two numerical methods for solving a nonlinear system of integral equations of mixed Volterra-Fredholm type arising from a control problem related to leukemia, submitted.

- [6] Precup, R.: On some applications of the controllability principle for fixed point equations, Results Appl. Math. 13, 100236 (2022). https://doi.org/10.1016/j.rinam.2021.100236

- [7] Precup, R., Dima, D., Tomuleasa, C., Şerban, M.-A., Parajdi, L.-G.: Theoretical Models of Hematopoietic Cell Dynamics Related to Bone Marrow Transplantation, in Frontiers in Stem Cell and Regenerative Medicine Research. Volume 8, eds Atta-ur-Rahman, Shazia Anjum, Bentham Science, Sharjah, pp 202–241 (2018). https://doi.org/10.2174/9781681085890118080008

- [8] Precup, R., Şerban, M.-A., Trif, D.: Asymptotic stability for a model of cell dynamics after allogeneic bone marrow transplantation, Nonlinear Dyn. Syst. Theory 13(1), 79–92 (2013). https://www.e-ndst.kiev.ua/v13n1/7(42).pdf

- [9] Precup, R., Şerban, M.-A., Trif, D., Cucuianu, A.: A planning algorithm for correction therapies after allogeneic stem cell transplantation, J. Math. Model. Algor. 11(3), 309–323 (2012). https://doi.org/10.1007/s10852-012-9187-3

- [10] Precup, R., Trif, D., Şerban, M.-A., Cucuianu, A.: A mathematical approach to cell dynamics before and after allogeneic bone marrow transplantation, Ann. Tiberiu Popoviciu Semin. Funct. Equ. Approx. Convexity 8, 167–175 (2010). http://atps.tucn.ro/pdf/8-2010-atps-abstracts.pdf

Appendix: A numerical method for constructing the separation surface between the two basins of attraction

We aim to build numerically the surface that separates the basin of attraction of the “good” attractor (when the bone marrow transplant succeeds) from the basin of the “bad” attractor (when the transplant fails).

Let be the curve at the intersection between the surface and the horizontal plane at . For numerical tractability, we approximate the surface by a ruled surface which connects the origin of the axes with the curve .

The surface intersects the plane approximately along a line

and the plane along the line

The above two lines intersect the plane in the points

respectively. We wish to compute the coordinates of points on the curve . To this end,

1. Let there be an equidistant partition of the segment , i.e. a series of points

where

and

such that the points divide the segment into equal parts. Equivalently, in vector notation:

Componentwise, we have

2. For each we compute the following: Let there be in the plane the normal on in and, on this normal, two series of equidistant points , spaced at from each other

that is the points are situated on the normal on on opposite sides of Vectorially

where is the unit vector orthogonal to that is

Componentwise, for all points we have

and respectively

To find an approximation of the surface we have employed the following pseudocode algorithm (implemented in Matlab R2021a):

FOR each we test whether the point lies inside the good or the bad basin of attraction, by following the trajectory of the solution that starts from that point (integrating using the coordinates of the point as initial conditions).

IF the trajectory leads to the bad attractor, we test in sequence the orbits of the points , , until we reach the first point whose orbit lands on the good attractor. The midpoint of the segment determined by the last two tested points will belong, within an approximation, to the curve / surface .

IF the trajectory leads to the good attractor, we test in sequence the orbits of the points , , until we reach the first point whose orbit lands on the bad attractor. The midpoint of the segment determined by the last two tested points will belong, within an approximation, to the curve / surface .

END_FOR

Every point on the curve , as found above, is then connected with the origin. The resulting ruled surface is a rough but useful approximation of the surface , computable in linear time with respect to and .
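The search performed inside the loop above can be sketched in Python (the original implementation is in Matlab and is not reproduced here). The function `find_boundary_point`, its parameters and the stand-in classifier `toy_lands_on_good` are assumptions: in the actual method the classification is obtained by integrating the system from the tested point and observing which attractor the orbit approaches, and the choice of which side of the normal to explore in each case is also assumed.

```python
import math


def find_boundary_point(start, normal, step, lands_on_good, max_steps=1000):
    """Walk along the normal until the basin label flips; return the midpoint
    of the last two tested points, or None if no flip is found."""
    start_good = lands_on_good(start)
    # Assumed convention: explore one side if the start is in the bad basin,
    # the opposite side if it is in the good basin.
    direction = -1.0 if start_good else 1.0
    prev = start
    for j in range(1, max_steps + 1):
        cur = tuple(s + direction * j * step * n for s, n in zip(start, normal))
        if lands_on_good(cur) != start_good:
            return tuple(0.5 * (p + c) for p, c in zip(prev, cur))
        prev = cur
    return None


def toy_lands_on_good(point):
    """Stand-in classifier: the 'good' region is x + y > 1 (illustrative only)."""
    return point[0] + point[1] > 1.0


unit_normal = (1 / math.sqrt(2), 1 / math.sqrt(2))
from_bad = find_boundary_point((0.2, 0.2), unit_normal, 0.1, toy_lands_on_good)
from_good = find_boundary_point((0.8, 0.8), unit_normal, 0.1, toy_lands_on_good)
print(from_bad, from_good)
```

The returned midpoint lies within half a step of the true boundary along the normal, so the spacing of the test points directly controls the accuracy of the reconstructed curve.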

Concerning the parameters for these simulations (see Fig. 1, Fig. 2 and Fig. 3), the following values are taken: where . In all the simulations we have used the following values for , , .
